Although I am beginning the setup with a single xServe, I intend to add another in the very near future - this would eliminate another (rather glaring) point of failure and distribute workloads. The xServe(s), behind a router or on a public IP, would provide the services/security needed for their contact with the outside world; these in turn would "collect" filesystems from a LAN of (lower-cost) clustered "server/storage" blocks that could be added at will. Yes, at the outset it was a blast - my idea was to divide the service between two levels: secure "head" servers serving files from clustered "disk-exporting" servers. My only goal is to have the POSIX-compliant filesystems on the subservers be accessible/readable from the "head" xServe in a "mirrored" fashion.

Again, thank you for your insightful input. I do have a shared-write system set up, but this is on a secondary "background" level (hierarchy-locked accounts are managed by crushFTP from the Leopard xServe). I may one day like to replicate/distribute services/payload, but that will be a later, secondary development. Should I also mention that the subservers run amd64 Debian Etch? That is a moot point here, though. It's not about the services the servers will provide; it's the storage space they mount. I would like either to write to two (mirrored) mounts simultaneously (glusterfs-ish) or to write to an active "master" node that would then replicate to its twin. I'll definitely look into the I/O angle, more SAN than NAS though - perhaps I should provide a bit more detail?

Basically, each server I want to cluster is directly SATA2-connected to two 12-terabyte xfs-formatted RAID 5 blocks. I have total control over the whole network, so no host support. At the least, can you tell me whether I'm seeking the impossible? Yep, I'm shooting in the dark here for any suggestions at all.
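To make the "write to two (mirrored) mounts simultaneously" option a bit more concrete, here is a minimal Python sketch. It assumes both storage blocks are already mounted as ordinary directories on the machine doing the writing; the function name `mirrored_write` and the mount paths are hypothetical and not part of glusterfs or any other tool mentioned here.

```python
import os
from pathlib import Path


def mirrored_write(rel_path, data, mounts):
    """Write the same bytes under every mount point (hypothetical helper).

    Each replica is written to a temporary file first and then renamed,
    so a reader on one mount never sees a half-written file. The write is
    NOT atomic across mounts: if the second mount fails, the first one
    already holds the new data and the replicas have diverged.
    """
    for mount in mounts:
        target = Path(mount) / rel_path
        target.parent.mkdir(parents=True, exist_ok=True)
        tmp = target.with_name(target.name + ".tmp")
        with open(tmp, "wb") as fh:
            fh.write(data)
            fh.flush()
            os.fsync(fh.fileno())  # push the data to disk before renaming
        os.replace(tmp, target)    # atomic rename within a single filesystem
```

A call would look like `mirrored_write("projects/report.txt", b"...", ["/mnt/block-a", "/mnt/block-b"])`. The gap between the two writes is exactly where a real cluster filesystem needs coordination, which this sketch does not attempt.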
The only other solution I can think of is having two Linux servers syncing between them, with only one of them exporting to Leopard as an NFS mount. There is no macFuse-xfs port to my knowledge.

DRBD was another possibility, but I don't see how this could be transformed/exported as an NFS mount (thus being readable by Leopard). Glusterfs (using macFuse) is the closest I have come to a solution, but unfortunately this means that the destination storage (behind the Linux servers) must be read/writable by a Leopard that only "understands" a limited number of filesystems - xfs not being one of them. Yet I am becoming increasingly persuaded that this is not possible - and even confirmation that it isn't would be quite helpful. What I would like to do is have a cluster (of two Linux servers) appear as a single (shared) NFS mount in the Leopard xServe. My problem probably lies between my own misunderstanding of clusters and Leopard's file sharing capabilities.

Implementing this stuff on Mac OS X Server would be a blast. (I've heard this most recently as a primary and some number of secondary or standby servers, depending on how this is running.) And that then gets into distributed coordination; some form of lock management.

The concept of "head" and "subserver" is an interesting one; it's a simple idea that tends to get ugly in a distributed configuration, particularly when random failures are inserted or expected. The next step up the stack here involves MySQL or another similar database-level replication mechanism. Shared write and full failover and such get a little more involved. There are some similar approaches here; search for rsync. The lower-end approach toward this (e.g., cheap, inelegant, manually assembled and often sufficiently functional) is something akin to rsync. (The Promise NAS gear may well be able to do this.) Xsan and Xgrid are the closest to this with the Apple gear, unless you go outboard with a NAS or SAN controller.
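For a feel of what "something akin to rsync" amounts to, here is a rough Python sketch of a one-way mirror from a primary directory to a replica. It is not rsync and has none of its delta-transfer or network features; the function name `mirror` and the directory layout are assumptions for illustration, and symlinks are not handled.

```python
import filecmp
import shutil
from pathlib import Path


def mirror(src, dst):
    """One-way sync: make dst match src. Returns the relative paths copied."""
    src, dst = Path(src), Path(dst)
    copied = []
    dst.mkdir(parents=True, exist_ok=True)

    # Copy anything that is new or whose contents differ on the replica.
    for item in sorted(src.rglob("*")):
        rel = item.relative_to(src)
        target = dst / rel
        if item.is_dir():
            target.mkdir(parents=True, exist_ok=True)
        elif not target.exists() or not filecmp.cmp(item, target, shallow=False):
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(item, target)  # copy2 also preserves timestamps
            copied.append(rel.as_posix())

    # Remove replica entries that no longer exist on the primary.
    # Reverse-sorted order visits children before their parent directories.
    for item in sorted(dst.rglob("*"), reverse=True):
        if not (src / item.relative_to(dst)).exists():
            if item.is_dir():
                item.rmdir()
            else:
                item.unlink()
    return copied
```

Run periodically (from cron, say) on the server holding the primary copy, this gives the cheap, manually assembled kind of replication described above: the replica lags by one interval, and nothing here arbitrates writes made on the replica side.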

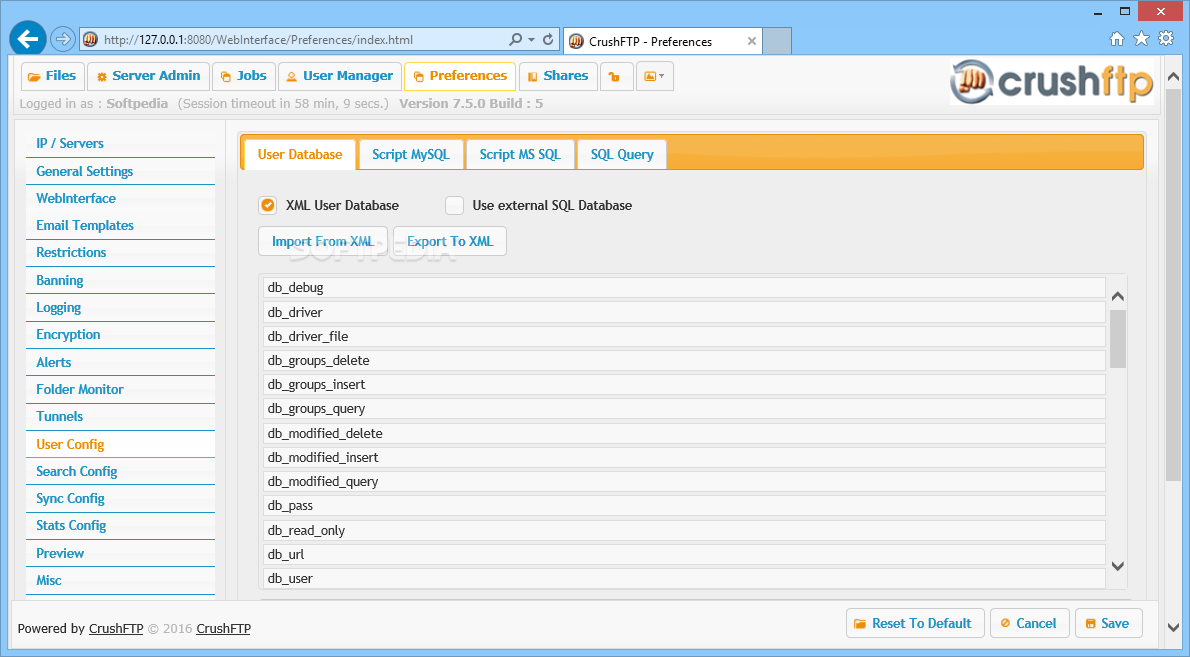

Intelligent virtual SAN controllers (and a few NAS controllers) can deal with (most of) this replication underneath the view of the host operating system. The difference between SAN and NAS is centrally one of bit error rates; SAN gear has lower bit error rates and higher speeds, and NAS has higher error rates and lower speeds. The difference here is that it's RAID1 across multiple hosts and controllers, targeting up to three disks. With OpenVMS, this is known as host-based volume shadowing. I've done exactly this with OpenVMS clusters (fully distributed shared-write within disks and within files), but I've not seen this implemented with other boxes.

Our product, CompleteFTP, allows you to add custom commands. Custom commands can be called from any protocol (FTP, SFTP, SSH, HTTP). Custom commands can be added via embedded JavaScript or via a .NET assembly. In this case it'd be called as an FTP SITE command.
